Deep Learning Hardware Deep Dive – RTX 3090, RTX 3080, and RTX 3070

Check out the discussion on Reddit (288 upvotes, 95 comments)

Published by Michael Balaban

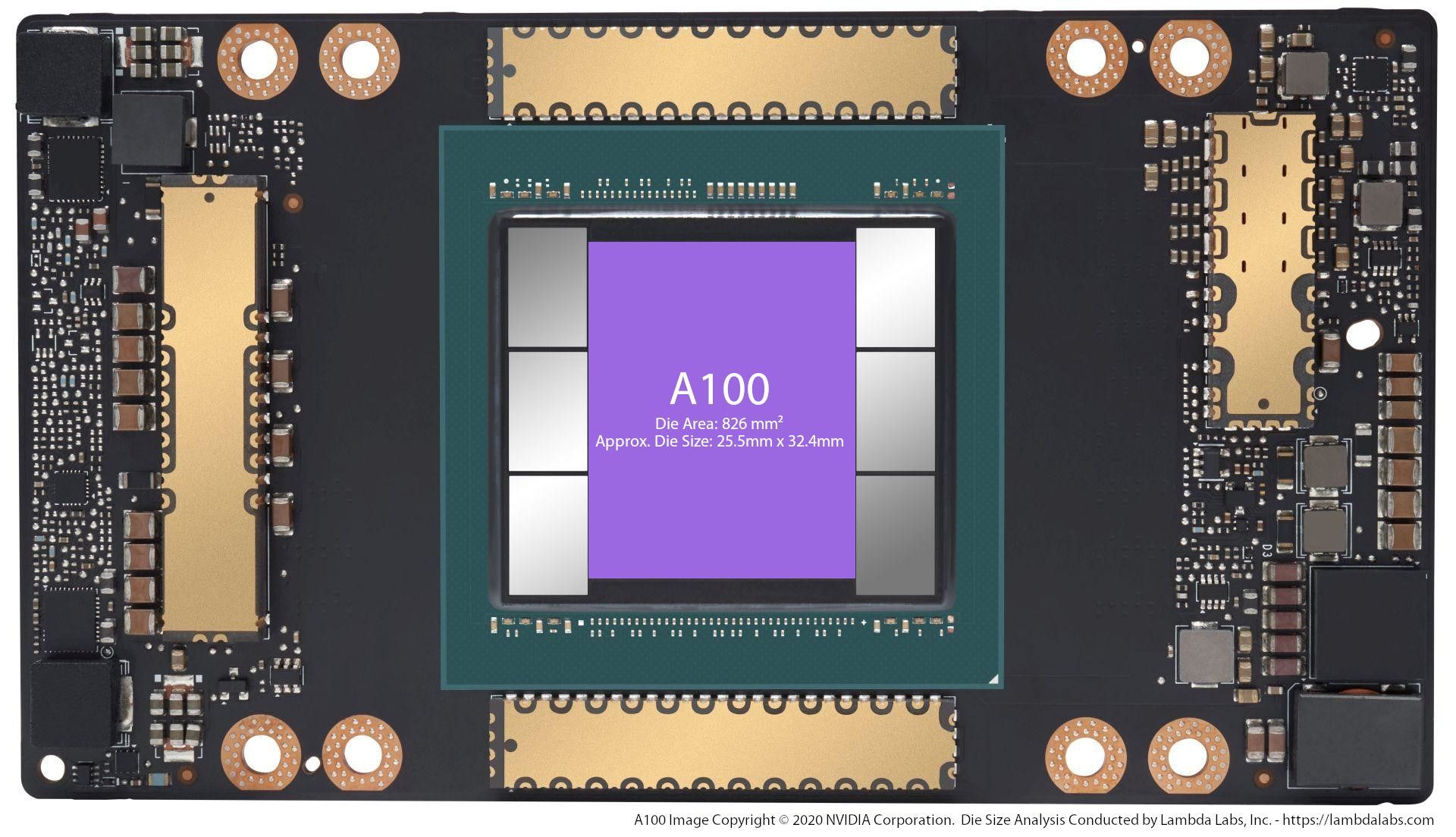

Lambda customers are starting to ask about the new NVIDIA A100 GPU and our Hyperplane A100 server. The A100 will likely see large gains on models like ...

Published by Stephen Balaban

State-of-the-art (SOTA) deep learning models have massive memory footprints. Many GPUs don't have enough VRAM to train them. In this post, we determine which ...

Published by Michael Balaban
