Get Into The ARMs Race: Future-Proof Your Workloads Now With Lambda

Blackwell is coming… so is ARM computing

2025 is just around the corner, and with it comes the highly anticipated launch of NVIDIA's revolutionary Blackwell ...

Published on by Thomas Bordes

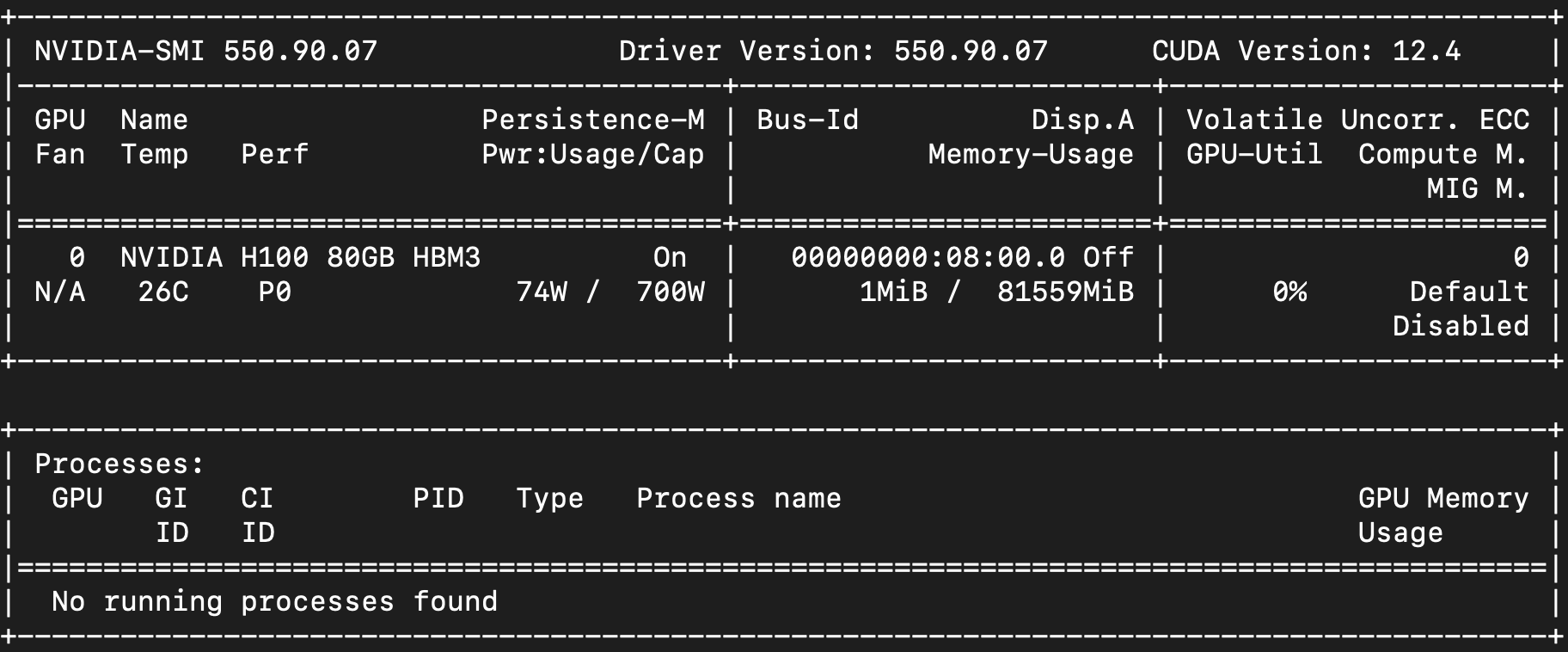

Opening up options: higher-end GPUs in smaller chunks

We're excited to announce the launch of new 1x, 2x, and 4x NVIDIA H100 SXM Tensor Core GPU instances in ...

Published on by Mitesh Agrawal

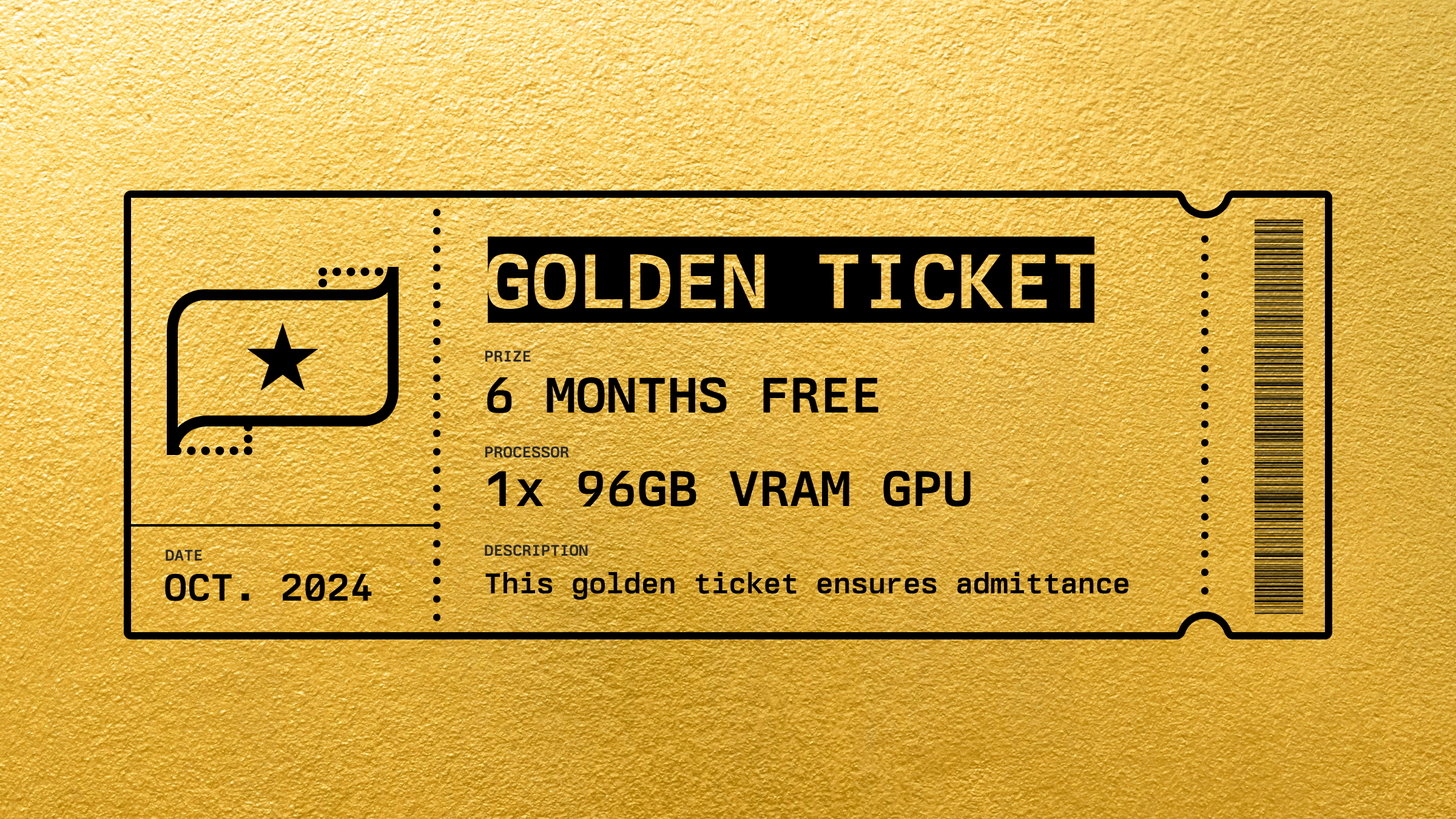

A Golden Ticket to an extraordinary prize

We’re excited to introduce Lambda’s Golden Ticket prize draw, offering you and your team the chance to win full-time ...

Published on by Robert Brooks IV

Create a cloud account instantly to spin up GPUs today, or contact us to secure a long-term contract for thousands of GPUs.