Lambda Raises $480M to Expand AI Cloud Platform

Artificial intelligence is restructuring the global economy, redefining how humans interact with computers, and accelerating scientific progress. AI is the ...

Published by Stephen Balaban

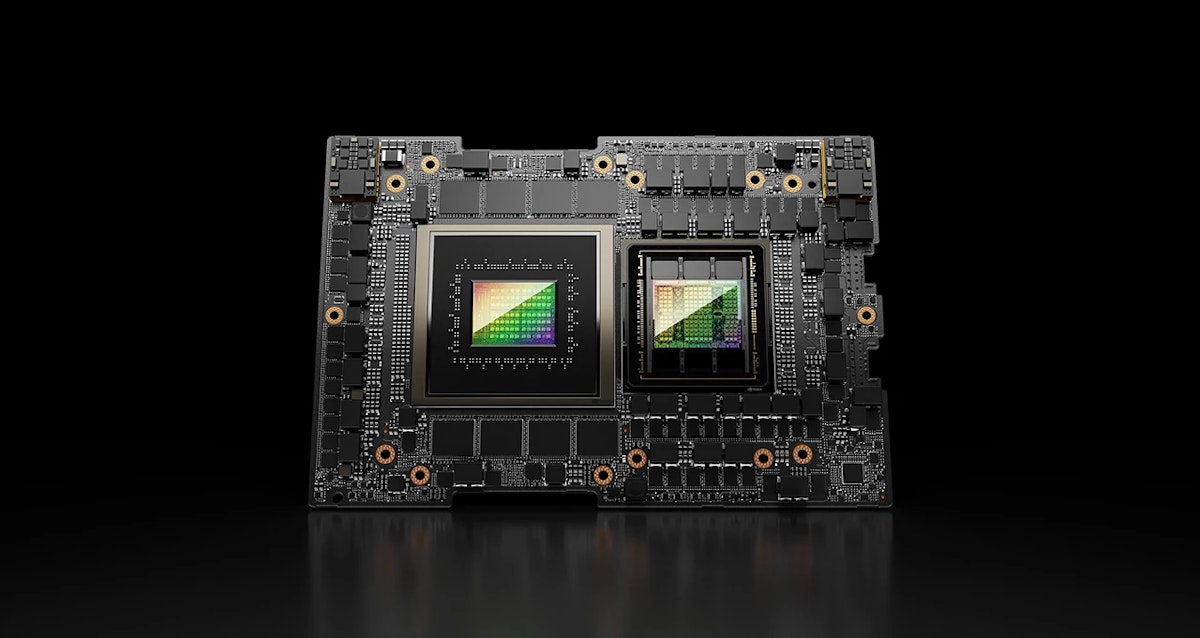

The NVIDIA GH200 Grace Hopper™ Superchip is a unique and interesting offering within NVIDIA’s datacenter hardware lineup. The NVIDIA Grace Hopper architecture ...

Published by Baseten

A list of Lambda launches this year. Feb 24, 2025: Service Credits. This allows you to view available service credits. The balance updates when credits are ...

Published by Doug Pan

Create a cloud account instantly to spin up GPUs today, or contact us to secure a long-term contract for thousands of GPUs.